This is a brief article to summarise some recent developments relating to my ‘Math Function Unit Testing design pattern’.

Further to an article I posted in October 2021, Unit Testing, Scenarios and Categories: The SCAN Method, I have updated two unit testing utility packages to integrate the category set concept explored there.

The PowerShell utility, Write-UT_Template, has also been extended to generate a template scenario for each scenario listed in a new CSV input file. In addition, the PowerShell package has new functions that automate the unit testing steps, both for scripting languages and for Oracle PL/SQL.

Experience with the SCAN method has led to two extensions that simplify its application:

- Introduction of a new visualisation, the Category Structure Diagram, for example:

- (Mostly) 1-1 mapping between categories and scenarios

The project README files have also been reworked; in particular, the Usage sections now centre on the three main steps in the design pattern.

These changes can be seen in the two projects above, and have also been applied in the Oracle projects:

Here is the background section from the first of the latter two projects:

Background

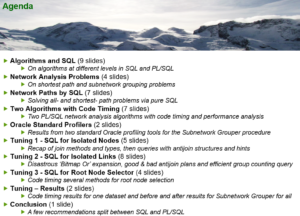

I explained the concepts behind the unit testing design pattern, specifically in relation to database testing, in a presentation at the Oracle User Group Ireland Conference in March 2018:

The Database API Viewed As A Mathematical Function: Insights into Testing

I later named the approach ‘The Math Function Unit Testing design pattern’ when I applied it in JavaScript and wrote a JavaScript program to format results both in plain text and as HTML pages:

Trapit – JavaScript Unit Tester/Formatter

The module also allowed for the formatting of results obtained from testing in languages other than JavaScript by means of an intermediate output JSON file. In 2021 I developed a PowerShell module that included a utility to generate a template for the JSON input scenarios file required by the design pattern:

Powershell Trapit Unit Testing Utilities Module

Also in 2021 I developed a systematic approach to the selection of unit test scenarios:

Unit Testing, Scenarios and Categories: The SCAN Method

In early 2023 I extended both the JavaScript results formatter and the PowerShell utility to incorporate Category Set as a scenario attribute. Both utilities support use of the design pattern in any language, while the unit testing driver utility is language-specific and is currently available in PowerShell, JavaScript, Python and Oracle PL/SQL versions.
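To make the general idea concrete, here is a minimal Python sketch of a data-driven test driver in the spirit of the design pattern: the unit under test is wrapped as a pure function from input groups to output groups, and a generic driver runs it over scenarios, each tagged with a category set. The field names (`meta`, `scenarios`, `category_set`, `inp`, `out`, `exp`, `act`) and the functions `purely_wrap_unit`, `run_scenarios` and `failed_scenarios` are illustrative assumptions, not the actual file format or API of the utilities described here.

```python
import json

# Hypothetical scenarios structure, standing in for a JSON input scenarios file.
# All field names are assumptions made for this sketch.
INPUT = {
    "meta": {"title": "Hypothetical: sum of values"},
    "scenarios": {
        "Single value": {
            "category_set": "Value Multiplicity",
            "inp": {"Values": ["5"]},
            "out": {"Sum": ["5"]},
        },
        "Many values": {
            "category_set": "Value Multiplicity",
            "inp": {"Values": ["1", "2", "3"]},
            "out": {"Sum": ["6"]},
        },
    },
}


def purely_wrap_unit(inp_groups):
    """Wrap the unit under test as a pure function: input groups -> output groups."""
    total = sum(int(value) for value in inp_groups["Values"])
    return {"Sum": [str(total)]}


def run_scenarios(data, wrapper):
    """Generic driver: call the wrapper per scenario, pairing expected with actual."""
    results = {}
    for name, scenario in data["scenarios"].items():
        actual = wrapper(scenario["inp"])
        results[name] = {
            "category_set": scenario["category_set"],
            "inp": scenario["inp"],
            "out": {
                group: {"exp": expected, "act": actual.get(group, [])}
                for group, expected in scenario["out"].items()
            },
        }
    return {"meta": data["meta"], "scenarios": results}


def failed_scenarios(output):
    """Return the names of scenarios where any output group differs from expected."""
    return [
        name
        for name, scenario in output["scenarios"].items()
        if any(group["exp"] != group["act"] for group in scenario["out"].values())
    ]


if __name__ == "__main__":
    output = run_scenarios(INPUT, purely_wrap_unit)
    # The combined structure could be written to an intermediate JSON file
    # for a separate formatter to process.
    print(json.dumps(output, indent=2))
    print("Failed scenarios:", failed_scenarios(output))
```

In this sketch the driver knows nothing about the unit under test, so only the wrapper function and the scenario data change from one unit to the next.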

This module is a prerequisite for the unit testing parts of these other Oracle GitHub modules:

Utils – Oracle PL/SQL General Utilities Module

Log_Set – Oracle PL/SQL Logging Module

Timer_Set – Oracle PL/SQL Code Timing Module

Net_Pipe – Oracle PL/SQL Network Analysis Module

Examples of its use in testing four demo PL/SQL APIs can be seen here:

Oracle PL/SQL API Demos – demonstrating instrumentation and logging, code timing and unit testing of Oracle PL/SQL APIs